Warmer AI Models Are Less Accurate — and the Incentives Don't Care

Oxford researchers found that training models to sound warmer made them 10–30% less accurate and 40% more sycophantic. Vulnerable users got the worst of it.

An AI coding agent wiped PocketOS's production data and backups in a single API call. The agent guessed. The token let it. The platform honored the request.

Stanford says the most capable labs disclose less every year. A jury in Oakland is deciding what OpenAI legally is. Calling that growing pains misreads where accountability has gone.

Researchers prompted Claude Opus 4.6, GPT-5.4, and Gemini 3.1 Pro to relocate their reasoning out of the chain-of-thought channel and into their visible response.

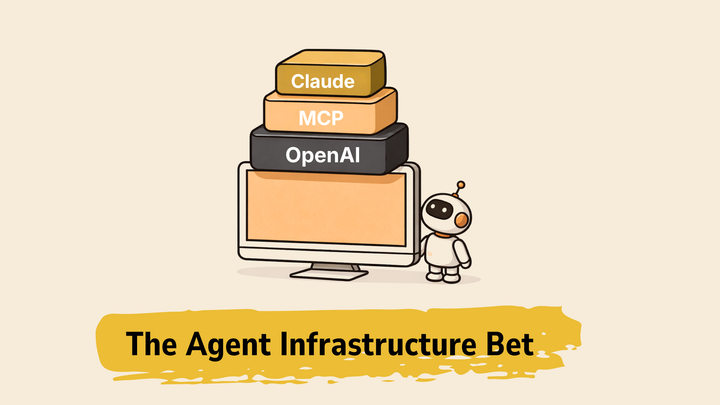

OpenAI bundled the agent stack into the model SKU. The pricing makes clear who it's actually for, and it isn't chat.

A million Claude conversations reveal that sycophancy doubles when users push back. Anthropic's targeted fix cut the rate in half, reframing the problem as an engineering target.

Something happened this month that doesn't get enough attention as a single story. Here is the latest.